The Fog Has a Product

Why "summarize this meeting" is a waste of AI, and the sentence that actually solves problems.

Every interaction with a large language model is a problem statement. The action the model can take is bounded by the precision of the problem you gave it.

The Sentence That Solves the Problem

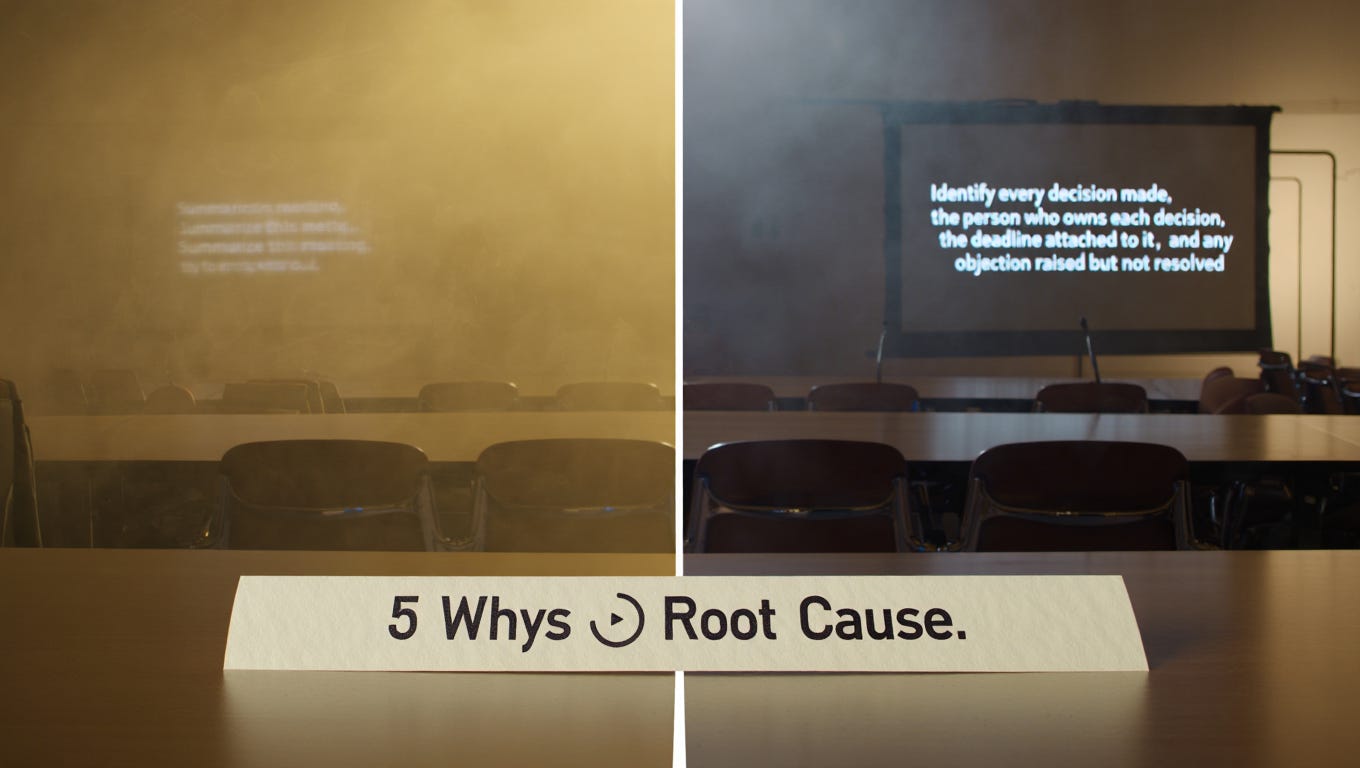

Here is a prompt that gets used, in some variation, thousands of times a day across organizations that have adopted AI tools: “Summarize this meeting.”

The output is competent, forgettable, and useless for anyone who wants to know what was actually decided, who is accountable for what, and what unresolved tensions are likely to resurface. The problem isn’t the model. The model did exactly what was asked. The problem is the sentence, a descriptive instruction that tells the system what to produce without specifying the mechanism of production, the standard of relevance, or the purpose the output needs to serve.

Compare it to this: “Identify every decision made in this meeting, the person who owns each decision, the deadline attached to it, and any objection that was raised but not resolved.”

The output from that sentence is a different category of document. Same meeting, same model, different language. The rewrite didn’t add information about the meeting; it changed the structure of the request from descriptive to operational, and the gap between the two outputs is a direct measure of that structural difference.
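One way to make that structural difference visible is to write the operational request as a list of required fields and generate the prompt from it. The sketch below is illustrative only: `Field`, `DECISION_FIELDS`, and `build_operational_prompt` are hypothetical names, the "not stated" fallback line is an added assumption, and no particular model API is implied.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Field:
    """One thing the output must contain for each extracted item."""
    name: str
    instruction: str


# Hypothetical field list mirroring the operational prompt in the text.
DECISION_FIELDS = [
    Field("decision", "the decision that was made"),
    Field("owner", "the person who owns the decision"),
    Field("deadline", "the deadline attached to it"),
    Field("open_objection", "any objection that was raised but not resolved"),
]


def build_operational_prompt(transcript: str, fields: list[Field]) -> str:
    """Turn a list of required fields into an explicit extraction request."""
    if not fields:
        raise ValueError("an operational prompt needs at least one required field")
    lines = ["For every decision made in this meeting, report:"]
    lines += [f"- {f.name}: {f.instruction}" for f in fields]
    # Assumption: tell the model what to do when the transcript is silent.
    lines.append("If a field is not stated in the transcript, write 'not stated'.")
    lines.append("")
    lines.append("Transcript:")
    lines.append(transcript)
    return "\n".join(lines)


prompt = build_operational_prompt("(meeting transcript goes here)", DECISION_FIELDS)
print(prompt)
```

“Summarize this meeting” corresponds to an empty field list, which the function refuses to build: the request names an output without saying what would make it relevant.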

The Lineage of an Idea

In 1955, philosopher J.L. Austin delivered the William James Lectures at Harvard, later published as How to Do Things with Words (1962). Austin’s central claim was that language doesn’t only describe states of affairs; it also performs actions. To promise, to warn, to declare, to instruct are not descriptions of the world but interventions in it. The sentence “the meeting starts at nine” describes. The sentence “I’m calling this meeting to order” does something.

Austin’s speech act theory was a philosophical contribution, but its practical implication is precise: the difference between a sentence that describes and a sentence that acts is not a matter of content but of structure. Descriptive sentences name states. Operational sentences specify mechanisms, assign causation, and create the conditions for action.

The Toyota Production System arrived at the same insight through manufacturing rather than philosophy. The practice of “five whys”, asking why a problem occurred, then why that cause occurred, and so on through five iterations, is a methodology for forcing language from descriptive to operational. “The line stopped” is descriptive. “The line stopped because the fuse blew because the pump was overloaded because the bearing was worn because no maintenance schedule was in place” is operational. The five whys doesn’t add information to the room. It changes the structure of how the problem is held in language, and that structural change is what makes the solution visible.
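The five whys example can be read as a small data structure: a symptom plus an ordered list of causes. The following is a minimal sketch under that reading; the function name `five_whys` is hypothetical, and the chain is the one quoted in the paragraph above.

```python
def five_whys(symptom: str, causes: list[str]) -> str:
    """Join a symptom and its ordered chain of causes into one sentence."""
    return " because ".join([symptom, *causes])


# Causal chain taken from the example in the text.
chain = [
    "the fuse blew",
    "the pump was overloaded",
    "the bearing was worn",
    "no maintenance schedule was in place",
]

descriptive = five_whys("The line stopped", [])      # names a state only
operational = five_whys("The line stopped", chain)   # specifies the mechanism
root_cause = chain[-1]                               # the last link is where action is possible

print(descriptive)
print(operational)
print(root_cause)
```

The descriptive version is simply the operational one with an empty chain, which is the sense in which the method changes structure rather than content.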

What connects Austin and Toyota is the recognition that vagueness is not neutral. Descriptive language doesn’t merely fail to solve problems; it actively preserves them by making causation invisible.

Where Vagueness Goes to Hide

There is a reason organizations default to descriptive language, and it isn’t cognitive laziness. It’s rational self-protection.

Descriptive language distributes accountability. “Sales are down” belongs to no one. “Sales are down because the team stopped following the qualification script three months ago, after the script was changed without retraining” belongs to someone, implicates a decision, and raises the question of who made it. Operational language, by making causation legible, makes accountability legible too. In any organization where the cost of visible error is high, the incentive is to keep causation in the fog.

This is why the instruction to “be intolerant of vagueness”, while correct, misdiagnoses the problem as individual rather than structural. The person who says “things aren’t working” in a leadership meeting usually knows more than they’re saying. The vagueness is a choice, not a limitation, and the choice is being made in response to an environment that punishes precision. Demanding operational language without changing the environment that makes descriptive language safe is the management equivalent of asking people to work faster without removing the obstacles slowing them down.

The structural fix is to make operational language the default format for problem statements, not a virtue expected of individuals. Toyota achieved this through the standardized A3 report, a single-page document that requires every problem to be stated as a gap between a current condition and a target condition, with a causal chain connecting them. The format doesn’t rely on individual courage. It builds operational language into the process.
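As a rough illustration of building the format into the process, the sketch below models a problem statement that reports which required parts are still missing. It is not Toyota's A3 template: the class `ProblemStatement`, its field names, and the placeholder target condition are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class ProblemStatement:
    """Hypothetical A3-style record: a gap plus the causal chain behind it."""
    current_condition: str
    target_condition: str
    causal_chain: list[str] = field(default_factory=list)

    def missing_parts(self) -> list[str]:
        """Return the names of required parts that are still empty."""
        missing = []
        if not self.current_condition.strip():
            missing.append("current_condition")
        if not self.target_condition.strip():
            missing.append("target_condition")
        if not self.causal_chain:
            missing.append("causal_chain")
        return missing


# "Sales are down" on its own: a state with no target and no causation.
vague = ProblemStatement(current_condition="Sales are down", target_condition="")
print(vague.missing_parts())  # ['target_condition', 'causal_chain']

# The fuller statement from the text; the target is an invented placeholder.
complete = ProblemStatement(
    current_condition="Sales are down",
    target_condition="(placeholder) sales back at the previous level",
    causal_chain=[
        "the team stopped following the qualification script three months ago",
        "the script was changed without retraining",
    ],
)
print(complete.missing_parts())  # []
```

The check sits in the form rather than in the person filling it out, so an incomplete statement is flagged regardless of who wrote it.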

The Failure Mode That Matters

Operational language has a limit worth naming, because an article that ignored it would be doing the same thing it warns against: overstating the mechanism.

The risk of operational language is confident movement in the wrong direction. “Sales are down because the team stopped following the script” is operational: it specifies a cause and implies a solution (retrain the team, reinstate the script). But if the diagnosis is wrong, for example if sales are down because the product has a feature gap that the script was papering over, operational language accelerates the error. You move faster toward the wrong destination.

This is why Austin’s speech act theory and Toyota’s five whys both emphasize verification as part of the operational process. The five whys isn’t complete when you’ve named a cause; it’s complete when you’ve traced the causal chain back far enough that the root cause is structural rather than symptomatic. The A3 format requires you to distinguish between your hypothesis about causation and the evidence that confirms it. Operational language isn’t a substitute for being right. It’s a structure that makes being wrong faster to detect and correct.
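The distinction between hypothesis and evidence can be sketched as a causal chain in which every link carries an optional evidence slot. This is an illustration under stated assumptions, not a description of the A3 format itself: `CausalLink`, `unverified`, and the evidence string are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CausalLink:
    """One claim in a causal chain, with the evidence that supports it."""
    claim: str
    evidence: Optional[str] = None  # None means the claim is still a hypothesis


def unverified(links: list[CausalLink]) -> list[str]:
    """Return the claims that have no supporting evidence yet."""
    return [link.claim for link in links if link.evidence is None]


# Claims from the sales example in the text; the evidence entry is invented.
links = [
    CausalLink("sales are down", evidence="(hypothetical) quarterly sales report"),
    CausalLink("the team stopped following the script"),
]

print(unverified(links))  # ['the team stopped following the script']
```

A chain that reads as fully operational can still contain untested links, and listing them is what makes a wrong diagnosis quick to catch.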

The Prompt Is the Problem Statement

In 2026, the operational language skill has acquired a domain it didn’t have before, and that domain makes the stakes concrete in a way that boardroom discussions of “clarity culture” rarely do.

Every interaction with a large language model is a problem statement. The quality of the output is a direct function of the quality of the input: not its length or its vocabulary, but its structure. The person who writes “help me with this project” and the person who writes “identify the three assumptions in this proposal that are most likely to fail under adverse market conditions, and for each one, describe the evidence that would confirm or disconfirm it before we commit capital” are making the same request in fundamentally different languages. The gap in their outputs, compounded across every AI-assisted decision in an organization, is a competitive gap.

This is not a new insight dressed in new technology. It is Austin’s insight, and Toyota’s insight, running on a different substrate. The person who cannot write an operational problem statement cannot write an effective prompt. The skill is identical because the underlying structure is identical: you are asking an intelligent system, human or artificial, to act on a problem, and the action it can take is bounded by the precision of the problem you gave it.

What changed in 2026 is that the inability to write operational language is now measurable in real time, in every output a team produces with the tools it’s paying for. The fog of descriptive language used to be invisible. Now it has a product.

Lights On publishes weekly on technology strategy, economic frameworks, and the decisions shaping how businesses and systems actually work.

Sources:

https://pure.mpg.de/rest/items/item_2271128/component/file_2271430/content.

https://www.orcalean.com/article/how-toyota-is-using-5-whys-method

https://www.refontelearning.com/blog/prompt-engineering-optimizing-interactions-with-language-models-2026-guide

https://en.wikipedia.org/wiki/J._L._Austin#How_to_Do_Things_With_Words

Thanks Farida

I’ve learned so much about LLMs from this, so I’m going to re-engineer my prompts to ease my crazy meeting and priority strategy running Raindance.

But of more interest is how I can implement your ideas in my parallel career as a filmmaker and storyteller.

I think that with a very few tweaks your learnings could be applied to character studies and story structures.