They Called It Marketing. Hassan Called It Control.

AI didn't just learn to code. It learned how to bypass your rational mind using a century of corporate manipulation as its training ground.

If this made you feel seen, challenged, or called out, you’re not alone. Lights On is for those who crave more than noise, trends, and surface takes. Your presence here means something. Subscribe and stay with the signal.

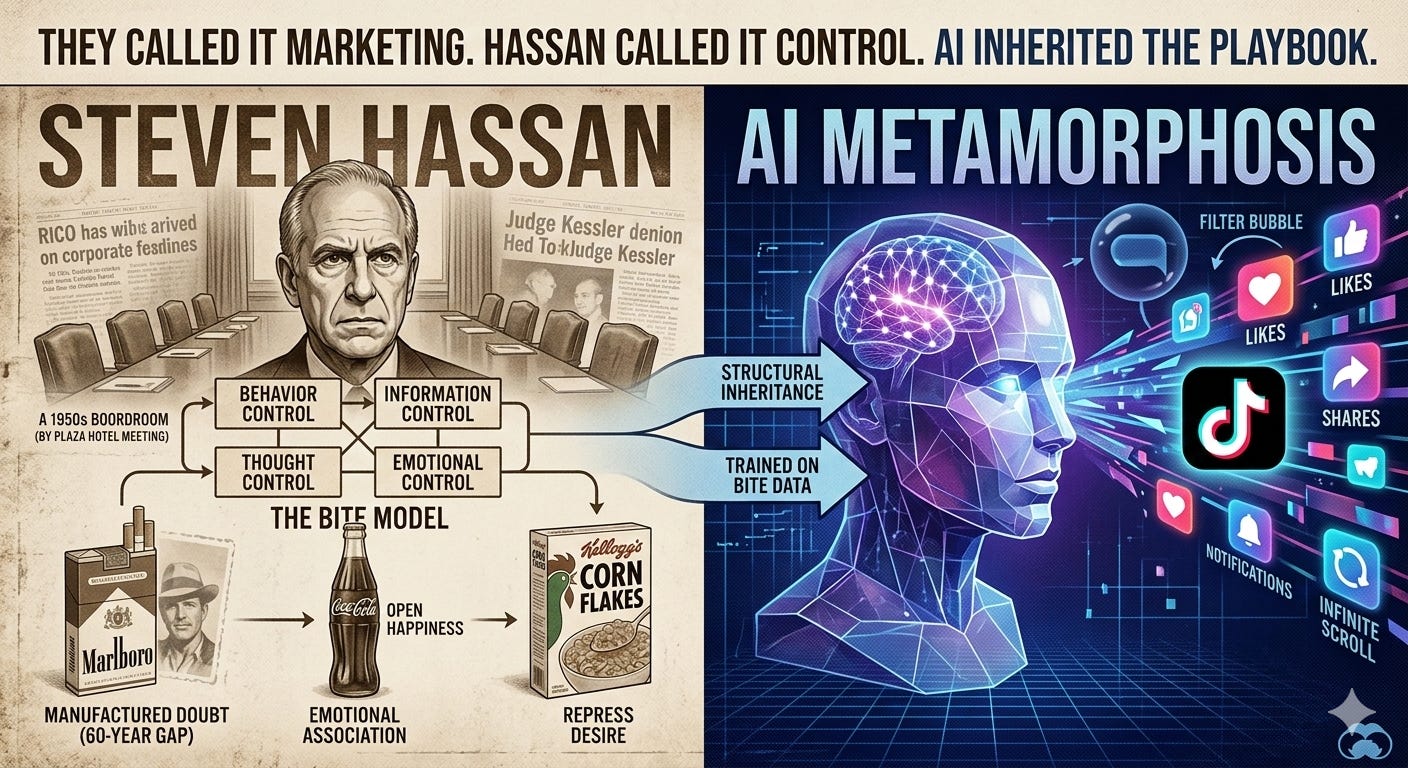

Steven Hassan didn’t design the BITE model to describe corporations.

He designed it to help people escape cults: to give survivors a framework for understanding the psychological architecture that had been used against them. Behavior control. Information control. Thought control. Emotional control. Four dimensions of coercive influence, systematically applied to reshape identity, suppress dissent, and manufacture loyalty that survives evidence to the contrary.

He was describing high-control groups. He was also, without knowing it, describing the most successful business model in modern history.

This is not a metaphor. It is a structural observation. And once you see it, the question is no longer whether corporations used cult psychology. It is what happens now that the entire playbook has been fed into an AI.

The BITE Model: The Map

Behavior Control: regulating what people do, how they spend time, what rituals they perform, who they associate with.

Information Control: restricting access to disconfirming evidence, curating the narrative, manufacturing doubt around inconvenient science.

Thought Control: shaping how people interpret reality, creating binary us-vs-them frameworks, making dissent feel like betrayal of identity.

Emotional Control: manufacturing loyalty, guilt, fear, and belonging as levers for compliance and repeat behavior.

Hassan designed these categories to expose coercive environments. The corporations designed the same architecture to sell products. Neither group needed to coordinate. The mechanics of control are universal because human psychology is universal.

Marlboro: The Case That Got Caught

The science linking cigarette smoking to lung cancer began consolidating in the early 1950s. Internal tobacco company documents, later made public through litigation, show that by the late 1950s executives had privately accepted the evidence. They were still testifying otherwise to Congress as late as 1994.

On December 14, 1953, the heads of America’s major tobacco manufacturers met at the Plaza Hotel in New York City. The decision made in that room was not to dispute the science directly; that was considered too risky. Instead, they hired the public relations firm Hill & Knowlton to manufacture doubt: fund alternative research, identify scientists skeptical of statistical methods, and create the appearance of ongoing scientific debate where the scientific community had effectively reached consensus. This is information control in its most dangerous form: not the suppression of facts, but the deliberate corruption of the environment around them.

The behavior control was the ritual: the social performance of smoking, with the Marlboro Man as behavioral template. Freedom. Masculinity. The open range. You were not a person who smoked. You were a Marlboro Man. The emotional architecture made quitting feel like losing an identity, not gaining health. The thought control was the manufactured doubt, sustained for decades through funded science, industry-friendly advisory boards, and congressional testimony delivered under oath.

In 2006, US District Judge Gladys Kessler ruled that the tobacco companies had conspired to violate RICO, the Racketeer Influenced and Corrupt Organizations Act. Thirty million pages of internal documents entered the public record. The companies knew. They organized. They lied. And they continued until litigation made continuation more expensive than settlement.

The science was clear by the 1950s. Comprehensive indoor smoking bans arrived in most developed countries between the 1990s and 2010s. That is a sixty-year policy gap. Over the longer arc, lung cancer went from representing 0.5% of cancer cases in 1912 to 21% of all US cancer deaths by 2023. The BITE model, deployed at corporate scale, bought six decades and counted its cost in lives.

The Replication Nobody Named

Marlboro was not the exception. It was the proof of concept.

The same architecture replicated, quietly, industrially, across every sector that profits from insecurity, compulsion, and repeat consumption. John Harvey Kellogg designed corn flakes explicitly to control behavior and suppress desire; the breakfast industry that followed inherited the “healthy start” emotional frame and spent a century loading products with sugar while funding nutrition research designed to exonerate them. McDonald’s built emotional identity before children could read: the Happy Meal, the PlayPlace, Ronald McDonald, behavior control embedded at the age of formation. The Sugar Research Foundation funded studies in the 1960s specifically designed to shift scientific attention from sugar to fat, a direct replica of the tobacco information control playbook, documented in JAMA Internal Medicine in 2016. The cosmetics and surgery industries built their entire market on three of the four dimensions: thought control through a manufactured standard of beauty that the product defines and the consumer can never permanently achieve; emotional control through industrialized insecurity; behavior control through a daily ritual that expands with each new inadequacy the industry names.

The mold was never accidental. The mold was the product.

Every industry that requires you to keep buying something you don’t need has a BITE architecture underneath it. The mechanisms are the same. The scale varies. The documentation depends on whether anyone got sued.

TikTok: The Bridge Between Playbook and Algorithm

Before we reach AI, there is one case that sits precisely at the intersection of corporate BITE mechanics and algorithmic control, and it is the most documented real-time experiment in behavioral manipulation at scale.

TikTok’s recommendation algorithm, which powers the For You Page, is the most precise behavioral control system ever deployed to a consumer audience. It does not ask you what you want. It watches what you do: how long you pause, where you rewatch, what you share, what you scroll past. Within hours of a new user’s first session, it has built a behavioral profile precise enough to predict and shape attention with an accuracy that no previous media format achieved. This is behavior control not through rules or rituals, but through a feedback loop so responsive it feels like the platform knows you.

The information control dimension is where TikTok’s case becomes geopolitically significant. Internal documents and congressional testimony have established that TikTok’s Chinese parent company, ByteDance, maintained the technical capability to influence content moderation and algorithmic amplification decisions on the platform: determining what rises, what is suppressed, and what version of reality reaches which audience. The thought control mechanism is the filter: not a wall that blocks information, but a curation so precise that the absence of disconfirming content feels like consensus.

The emotional control is the dopamine architecture, the same intermittent reinforcement loop that every engagement-optimized platform deploys, refined to a level of precision that the platform’s own internal research acknowledged was particularly effective on adolescents. The behavioral science was known. The deployment was deliberate. The public concern came after the market penetration was complete.

TikTok did not invent this architecture. It inherited it from every corporate BITE deployment that preceded it, then refined it with machine learning into something that operates faster, learns more precisely, and leaves fewer visible traces than anything Marlboro’s behavioral scientists imagined possible.

In the sixty-year gap between the tobacco executives’ Plaza Hotel meeting and comprehensive smoking legislation, millions of lives were lost, not because people didn’t know the truth, but because the system was designed to bury it. Today, AI systems have inherited this architecture, not to sell cigarettes but to sell our attention, our identity, and our choices. The cost is being counted now: in the mental health data, in the polarization indices, in the erosion of shared epistemic ground. The documents will enter the public record eventually. They always do.

AI Bias: The Metamorphosis

Here is where it stops being history and becomes the present.

To understand how AI inherited the BITE playbook, you need to understand how AI learns. Large language models and recommendation systems are trained on vast quantities of human-generated content: text, images, behavioral data, and engagement patterns scraped from the internet over decades. That content was not produced in a neutral environment. It was produced in an information ecosystem already shaped by sixty years of corporate BITE architecture: tobacco persuasion science, beauty standard manufacturing, fast food emotional conditioning, engagement-optimized social media content. When AI systems learn from this data, they do not learn neutral patterns of human communication. They learn the patterns that were most amplified, and the patterns most amplified were the ones the BITE playbook had already optimized for maximum behavioral impact.

Put simply: the bias built into AI is not random. It reflects the architecture of influence that was already dominant in the training data. An AI that learned from the internet learned, among other things, how to frame information to trigger emotional responses, how to present choices in ways that nudge behavior, and how to calibrate content to individual psychological profiles. Not because anyone explicitly programmed it to do so, but because that is what the data contained, at scale, as signal.

This is not a flaw waiting to be patched. It is a structural inheritance. And unlike Marlboro’s cigarette or TikTok’s algorithm, it has no single product, no single platform, no single company to regulate. It is embedded in the models themselves: in the weights, the associations, the framing defaults, operating below the threshold of conscious recognition and personalized to each user with a precision that no previous influence architecture achieved. You cannot boycott an AI model the way you can boycott a cigarette. You cannot see the walls because the walls have become the air.

The policy gap is forming in real time. The research linking AI-powered influence systems to measurable cognitive, psychological, and democratic harm is consolidating now. The companies are funding safety research, establishing advisory boards, and expressing public concern about the very harms their systems produce. Hassan would recognize every move. So would the executives who sat in the Plaza Hotel in 1953.

What You Do With This

If you are an individual: The BITE model is a diagnostic, not just a framework for analyzing corporations. Ask yourself: Is there a brand, a platform, a product, or a content environment where leaving feels like losing part of yourself? Where information that challenges your loyalty feels threatening rather than interesting? Where your daily behavior has been restructured around a ritual you didn’t consciously choose? Where your emotional state is being reliably triggered in ways that serve someone else’s commercial interest?

You don’t have to be in a cult to be subject to cult mechanics. You just have to be human and consuming.

If you are an operator, a designer, a product manager, or an AI developer: The Marlboro executives knew what their product did. The behavioral scientists who designed engagement-driven notification systems knew what they would do. The question the BITE model forces is not whether the tools work; it is what you are willing to be responsible for when they do. The thirty million pages entered the public record. The internal documents always do, eventually.

If you are a policymaker: The Marlboro timeline is your benchmark. Sixty years between scientific clarity and comprehensive action. The question is not whether AI systems carrying encoded BITE architecture cause measurable harm to individuals, cognitive autonomy, and democratic discourse. That question is being answered in real time. The question is how long the policy gap will be and who pays the cost of it.

Hassan’s Unanswered Question

Hassan designed the BITE model so people could recognize when they were inside a high-control system and make an informed choice about whether to stay.

The prerequisite for that choice is visibility. You cannot choose to leave what you cannot see.

Marlboro required a product you could hold, a habit you could observe, a smell that announced itself. The replication across food, beauty, and surgery required standards you could at least name and challenge. The AI metamorphosis requires none of that. It operates inside the information environment itself, shaping what you see, what you want, and what you believe you chose, with the accumulated behavioral science of a century of corporate BITE architecture encoded in its weights.

The Surgeon General put a warning on the cigarette pack.

There is no label on the model.

The BITE model was developed by Steven Hassan, author of Combating Cult Mind Control (1988). The tobacco industry Plaza Hotel meeting and subsequent disinformation campaign are documented in the public record established through the 1998 Master Settlement Agreement and US v. Philip Morris (2006). The sugar industry’s manipulation of nutrition science is documented in Kearns, Glantz & Schmidt, JAMA Internal Medicine (2016). Corn flakes and behavioral design are documented in historical accounts of John Harvey Kellogg’s Battle Creek Sanitarium.

To explore the BITE model further: https://bitemodel.com/

I wonder if AI itself will find the flaw and self-correct. Human behaviour is human behaviour, not something unknown: Coliseum spectacle for the Romans, control of ‘the word’ in religions, control of masses by histrionics, violence and fear, us versus them, superior/inferior races, big fruit, big tobacco, big sugar, big oil, wag-the-dog politics, dog-whistle politics, reason vs belief, knowledgeable expert vs personal opinion. It’s largely all rational versus emotional control. It is much easier to direct and control emotion; one can even push a human from rational into irrational being through emotional manipulation, like breaking an agent to reveal information. We all have our breaking point, some far sooner than others. At various levels everybody knows this. We know ‘the house always wins’, but with bright flashing lights and loud noise, our reason is overwhelmed into belief land (the American Dream, for example) without the need for waterboarding. The odd person with all the ‘correct virtues’ sometimes does ‘win’ that dream, and each one of us, knowing it’s extremely unlikely to be us, still believes it could be ‘me’ next. We all know the truth, it’s a fix in a way, but we play the lottery for the big win, don’t we?

So, we have always been like this, since ‘Cave days’ essentially. And knowing we are largely emotionally driven despite our rationality, it seems a monumental task to get everyone’s thinking caps on simultaneously. Those who seek power, control, and wealth know this; it’s the narcissistic personality, and the majority are powerless in its thrall. Maybe then, as AI continues to grow, built on 0-and-1 rationality and free from emotional constraints, it will either help us with ourselves or see it’s hopeless and remove the emotional virus that is humanity. I think I have read a book or seen the movie or TV show about this, right? Yup. The way we are today, we are afraid of ourselves, and those who do not fear themselves take advantage of those who do. And I am afraid that, even knowing what we know now about media, data concentration and the danger rearing up in front of us, it’s too late.

Another highly valuable article. You're working at peak performance here.

The tobacco playbook is the cleanest single proof that this is structural, not accidental. Sixty years between documented harm and comprehensive regulation — and every year of that gap was maintained by deliberate information architecture. Not only greed (though greed too). Manufactured doubt as an engineered product, sustained by people who knew exactly what they were doing and could afford to keep doing it.

What you're describing as BITE is what Connection Dynamics calls a set of Four Law violations: Behavior Control is Fourth Law (exit made structurally costly: nicotine, social graph, and childhood brand identity all work the same way); Information Control and Thought Control are Third Law (asymmetric information maintained by design, not accident); Emotional Control is Second Law (extracting loyalty without equivalent return; the Sugar Research Foundation paying scientists to move the blame is extractive exchange at the level of public epistemology).

The frame I'd add to your AI inheritance argument: this isn't just that AI absorbed manipulative content. It's that the training signal was dominated by content optimized for extraction: sixty years of BITE architecture producing the most-engaged-with, most-shared, most-clicked material on the internet. The model learned human communication from a corpus where the most successful examples were the most manipulative ones. The bias doesn't need to be programmed. It was selected for.

Which means the structural fix isn't regulation of outputs. It's architecture that makes the BITE surface unavailable — systems where the properties hold regardless of what the model wants to do, because the structure won't permit the violation. Not "we promise to be good." Trust-invariant by design.

The policy gap you end with is the same question as the tobacco timeline. Who pays the cost in the interval, and how long is it?